From the 1990s to the early 2000s, the phrase 'digital divide' saw its popularity peak. Now that less is heard of it, has the divide been bridged for good? Access no longer seems as big a problem as it did back then. With smartphones, cheap consumer electronics and improving wireless technology, getting more people online seems only a matter of time. If the present trend continues, it won't be long before almost everybody on the planet becomes part of this massive network. The question now beginning to be asked is whether merely having access to the technology means the divide has been bridged, or whether we should be concerned about new technological divides based on differences in patterns of access, gains in productivity, improvements in the quality of life, and the way in which modern communication promotes desirable societal and political goals.

One of the world's first fully functioning computers was developed in 1941 by a German inventor named Konrad Zuse, who also described Plankalkül, considered the forerunner of modern programming languages. His work went unnoticed for a long time in the non-German-speaking parts of the world. Had it been otherwise, the language of computing could possibly have been German rather than English. As it turned out, many of the more widely recognised and disseminated advances in computing came from the English-speaking world. English had other advantages too. At the dawn of the computer age, English had already been introduced to vast stretches of the world by the British Empire. It was then helped by the United States becoming the dominant global centre of economy and culture after the Second World War and the Cold War. The end of the Cold War opened a global market for the likes of MTV and CNN, and with them the English language. However, when it comes to the proliferation of English, nothing comes close to the immense spread, rate of adoption and effect of the internet.*

The internet as we know it today is largely an American phenomenon. Our daily online needs are served almost exclusively by US internet giants, most of them based in Silicon Valley: Google, Facebook, Twitter, YouTube, Dropbox, Amazon, eBay and more. As a result, the internet's design and evolution have been shaped by Western democratic values. We'd likely not have the internet in its relatively unstructured and decentralised current form had it come out of Soviet Russia. But with those values also came the language: English. The American Standards Association's original ASCII code, the dominant encoding scheme of the web until a few years ago, defines only 128 characters to represent all the textual information a computer needs, to the exclusion of characters alien to English. Had Germans taken the lead, for instance, an equivalent standard would certainly have accommodated accented characters. Still, regardless of which Western culture computing advances might have come from, for Southasia and other regions with non-Latin alphabets computing would still have had to be done in a foreign language and alphabet, or in unintuitive versions of their own languages.

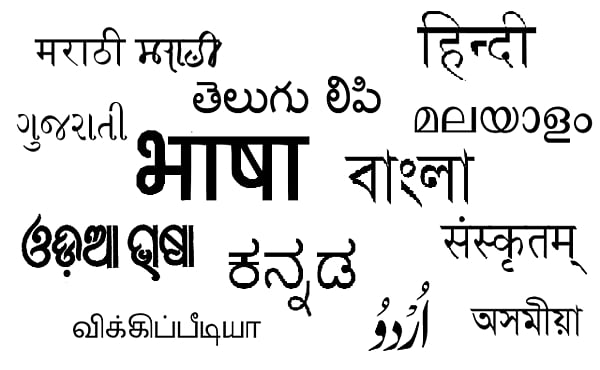

Unicode, most commonly encountered on the web in its UTF-8 encoding and popularised in the last decade, has transcended the limitations of ASCII by covering more than a hundred thousand characters within a single standard. This has made it possible to represent many different writing systems using one encoding scheme, instead of having to use a separate one for each. Today, UTF-8 is the most popular character encoding on the web.
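The difference between the two schemes can be seen in a few lines of Python. This is only an illustrative sketch: the helper name `encoded_size` and the three sample words are chosen here for the example and are not taken from any standard. It asks each encoding how many bytes a word needs, and reports `None` when the encoding cannot represent the word at all.

```python
from typing import Optional


def encoded_size(text: str, encoding: str) -> Optional[int]:
    """Return the number of bytes needed to store text in the given
    encoding, or None if the encoding cannot represent it."""
    try:
        return len(text.encode(encoding))
    except UnicodeEncodeError:
        # Raised when a character falls outside the encoding's repertoire,
        # e.g. any non-English letter in 128-character ASCII.
        return None


# Hypothetical sample words: plain English, German with accented letters,
# and Nepali written in the Devanagari script.
samples = {
    "English": "language",
    "German": "Größe",
    "Nepali": "नेपाली",
}

for name, word in samples.items():
    print(name, encoded_size(word, "ascii"), encoded_size(word, "utf-8"))
```

Only the English word survives ASCII. UTF-8 handles all three, storing English letters in one byte each, the German accented letters in two, and each Devanagari character in three.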